Practical Applications of Neuromorphic Computing for Developers

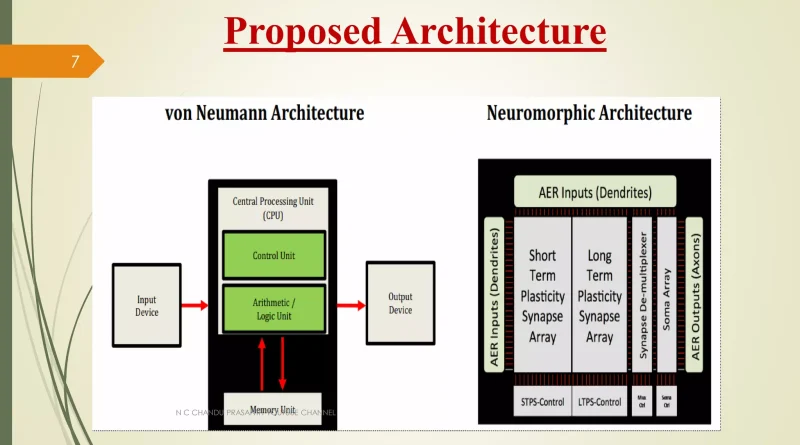

Let’s be honest: most of us in software development are working with a model of computing that’s, well, decades old. The von Neumann architecture, with its separate CPU and memory, is brilliant. But it’s starting to creak under the weight of modern AI workloads. The constant shuttling of data back and forth between processor and memory (the so-called von Neumann bottleneck)? It’s a traffic jam on the silicon highway.

That’s where neuromorphic computing swerves in. It’s not just another chip. It’s a fundamentally different way of thinking about computation, inspired by the human brain’s own wetware. Instead of a processor that steps through instructions on a global clock and fetches data from separate memory, it uses artificial neurons and synapses that keep memory and compute close together, fire sparsely, and process information in parallel. The result? Large efficiency gains for specific tasks.
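To make “fire sparsely” concrete, here is a minimal sketch of a leaky integrate-and-fire (LIF) neuron, a simplified neuron model widely used in spiking networks. The parameter names and values (`tau`, `v_thresh`, a unit time step) are illustrative assumptions, not the settings of any particular chip.

```python
from typing import List


def simulate_lif(inputs: List[float], tau: float = 10.0, v_thresh: float = 1.0,
                 v_reset: float = 0.0, dt: float = 1.0) -> List[int]:
    """Simulate one leaky integrate-and-fire neuron; return the steps at which it spiked."""
    decay = 1.0 - dt / tau  # fraction of membrane potential kept per step (Euler approximation)
    v = v_reset
    spikes = []
    for t, current in enumerate(inputs):
        v = v * decay + current  # leak toward zero, then integrate this step's input
        if v >= v_thresh:        # threshold crossed: emit a spike and reset
            spikes.append(t)
            v = v_reset
    return spikes


print(simulate_lif([0.3] * 20))    # steady drive: [3, 7, 11, 15, 19]
print(simulate_lif([0.05] * 100))  # weak drive: the leak wins, []
```

The output is a handful of spike times rather than a dense value at every step, and a weak input produces no output at all. That silence is where the energy savings come from.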

For developers, this isn’t just academic. It’s a toolkit for solving problems that are currently too slow, too power-hungry, or simply impractical on conventional hardware. So let’s dive into the practical, tangible applications you can start building toward.

Where Neuromorphic Hardware Shines (And Where It Doesn’t)

First, a quick reality check. You won’t be writing a standard Python web server for a neuromorphic chip. The programming model is different—often involving spiking neural networks (SNNs). Think of it as moving from imperative programming to a more event-driven, data-flow paradigm. It’s a shift, sure. But the payoff in certain domains is immense.
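Here is a rough sketch of what “event-driven” means in practice. A hypothetical four-neuron network (`A`, `B`, `C`, `D`, with made-up weights and delays) is simulated by popping spikes off a priority queue, so a neuron’s state is only touched when a spike reaches it, and the membrane leak is applied lazily for the elapsed time. This plain-Python illustration shows the idea only; it is not how any specific chip or framework schedules work.

```python
import heapq
import math
from collections import defaultdict
from typing import Dict, List, Tuple

# Hypothetical network: source -> [(target, weight, delay_ms), ...]
Synapses = Dict[str, List[Tuple[str, float, float]]]


def run_event_driven(synapses: Synapses, input_spikes: List[Tuple[float, str]],
                     threshold: float = 1.0, tau: float = 20.0):
    """Process spike events in time order; a neuron is only updated when an event reaches it."""
    # Queue entries are (arrival_time, neuron, weight); external inputs get an
    # infinite weight so they always make their neuron fire.
    queue = [(t, n, math.inf) for t, n in input_spikes]
    heapq.heapify(queue)
    v: Dict[str, float] = defaultdict(float)       # membrane potentials
    last_t: Dict[str, float] = defaultdict(float)  # time of each neuron's last update
    fired: List[Tuple[float, str]] = []
    updates = 0
    while queue:
        t, n, w = heapq.heappop(queue)
        updates += 1
        v[n] *= math.exp(-(t - last_t[n]) / tau)  # apply the leak lazily for the elapsed time
        last_t[n] = t
        v[n] += w
        if v[n] >= threshold:
            v[n] = 0.0
            fired.append((t, n))
            for target, weight, delay in synapses.get(n, []):
                heapq.heappush(queue, (t + delay, target, weight))
    return fired, updates


net: Synapses = {"A": [("C", 0.6, 1.0)], "B": [("C", 0.6, 1.0)], "C": [("D", 1.0, 2.0)]}
print(run_event_driven(net, [(0.0, "A"), (0.5, "B")]))
```

The whole run costs five neuron updates. A clock-driven simulator would update every neuron at every time step whether or not anything happened. Note also that `C` fires only because the two spikes reach it close together. Space them 30 ms apart and the leak drains `C` before the second one arrives.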

1. Real-Time Sensor Processing at the Edge

This is the killer app, honestly. Imagine a security camera that only wakes up the main system when it actually sees a person, not a swaying tree. Or a vibration sensor on a factory machine that can predict a bearing failure from a subtle, changing pattern.

Neuromorphic chips, like Intel’s Loihi (currently a research chip) or BrainChip’s Akida (a commercial product), are designed to draw far less power than a CPU or GPU on workloads like this. That makes them candidates for remote, battery-powered devices that process continuous streams of sensor data (audio, video, lidar, you name it) locally and in real time. No cloud round-trip latency, and no privacy exposure from streaming everything off-device. The development workflow here involves training SNNs to recognize temporal patterns, which are, you know, the bread and butter of sensory data.
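One common way to feed a continuous sensor stream into an SNN is to emit spikes only when the signal changes. The sketch below shows a simple send-on-delta (delta modulation) encoder. The threshold value and the `(index, polarity)` event format are assumptions for illustration. Real toolchains ship their own encoders.

```python
from typing import List, Tuple


def delta_encode(samples: List[float], threshold: float) -> List[Tuple[int, int]]:
    """Send-on-delta encoding: emit (index, +1/-1) each time the signal moves
    `threshold` away from the last encoded level."""
    events: List[Tuple[int, int]] = []
    if not samples:
        return events
    ref = samples[0]  # last level that was "transmitted"
    for i, x in enumerate(samples[1:], start=1):
        # A large jump can cross several thresholds, so emit one event per crossing
        while x - ref >= threshold:
            ref += threshold
            events.append((i, +1))
        while ref - x >= threshold:
            ref -= threshold
            events.append((i, -1))
    return events


print(delta_encode([0.0, 0.0, 0.0, 0.25, 0.5, 0.5, 0.5, 0.0], threshold=0.25))
# [(3, 1), (4, 1), (7, -1), (7, -1)]
```

A sensor reading that sits still produces no events at all, while a change produces a short burst. That sparsity is what the downstream spiking network, and the hardware, exploit.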

2. Next-Level Always-On Voice Interfaces

We’ve all yelled at a smart speaker that fails to wake up. Always-on keyword spotting costs power because a processor or DSP has to analyze every incoming audio frame, whether or not anyone is talking. A neuromorphic approach is different. It can be designed to listen for a specific wake-word loosely the way a biological ear and brain do: the network stays mostly quiet, and its output neuron only “spikes” when the right pattern arrives.

For developers, this means voice interfaces that are more responsive and more private (audio never has to leave the device), and that, with a small enough power budget, could run for months on a coin cell. It opens up design space for truly ambient computing in places we can’t run a power cord.
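Whether something can run “for months on a coin cell” comes down to simple arithmetic on average power. The sketch below assumes a 220 mAh, 3 V cell, which is in the range commonly quoted for CR2032-class coin cells. The power figures are hypothetical budgets, not measurements of any chip. The model is idealized: it ignores self-discharge, temperature, and voltage sag.

```python
def battery_life_days(capacity_mah: float, voltage_v: float, avg_power_mw: float) -> float:
    """Idealized battery life: stored energy divided by average power draw."""
    energy_mwh = capacity_mah * voltage_v    # mAh x V = mWh
    return energy_mwh / avg_power_mw / 24.0  # hours -> days


# Hypothetical average power budgets on an assumed 220 mAh, 3 V coin cell
for label, power_mw in [("0.1 mW", 0.1), ("1 mW", 1.0), ("10 mW", 10.0)]:
    print(f"{label}: {battery_life_days(220.0, 3.0, power_mw):.1f} days")
```

At an average of 0.1 mW, the cell lasts roughly 275 days. At 10 mW, it lasts under three. What matters is the average draw, so a system that stays quiet until the right pattern appears has a large advantage over one that processes every frame.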

Getting Your Hands Dirty: Development Frameworks & Tools

Okay, so how do you actually build for this? The ecosystem is young, but it’s growing fast. You’re not coding in assembly for a weird chip. High-level frameworks are emerging.

- Intel Lava: An open-source framework for developing neuro-inspired applications. It’s platform-agnostic: you can prototype on a conventional CPU and later map the same application onto neuromorphic hardware such as Loihi. A great place to start experimenting.

- NEST and Brian: These simulators are aimed mainly at computational neuroscience research, but they’re very useful for understanding SNN dynamics before deploying to hardware.

- SynSense Speck: A development kit built around a low-power neuromorphic vision chip that pairs an event-based (dynamic vision) sensor with a spiking neural network processor. It’s made specifically for building edge vision applications.

The workflow often looks like this: design and train a network (sometimes converting a traditional ANN to an SNN), simulate it extensively, then deploy to the target hardware. It’s a blend of machine learning and embedded systems development.
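ANN-to-SNN conversion is often rate-based. It rests on a simple observation: a non-leaky integrate-and-fire neuron driven by a constant input fires at a rate that tracks a ReLU activation, clipped at one spike per time step. Below is a minimal sketch of that observation, assuming rate coding and reset-by-subtraction. Production converters also rescale weights and thresholds layer by layer.

```python
def relu(x: float) -> float:
    return max(0.0, x)


def if_firing_rate(x: float, steps: int = 1000, v_thresh: float = 1.0) -> float:
    """Spike rate of a non-leaky integrate-and-fire neuron driven by constant input x.
    Reset-by-subtraction keeps the residual charge after each spike."""
    v = 0.0
    spikes = 0
    for _ in range(steps):
        v += x
        if v >= v_thresh:  # at most one spike per time step
            v -= v_thresh
            spikes += 1
    return spikes / steps


for x in [-0.5, 0.25, 0.5, 1.5]:
    print(x, relu(x), if_firing_rate(x))
```

For inputs between 0 and 1, the spike rate matches the ReLU output. Above 1, it saturates at 1.0. This clipping is why conversion pipelines normalize activations into the neuron’s linear range. It is also why more time steps give a closer approximation, at the cost of latency.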

A Practical Comparison: Traditional vs. Neuromorphic Approach

| Application | Traditional CPU/GPU Approach | Neuromorphic Approach |
|---|---|---|
| Always-On Object Detection | High power draw; constant video encoding/analysis; significant heat. | Ultra-low power; only processes “interesting” motion/edges; runs cool. |
| Adaptive Robotic Control | Often relies on pre-programmed or offline-trained responses; can struggle with unpredictable environments. | On chips with on-chip learning, can adapt in real time from sensor feedback; may handle uncertainty better. |
| Complex Pattern Recognition in Time-Series Data | Often requires large data windows and heavy computation for RNNs/LSTMs. | Neuron state acts as built-in short-term memory, which suits temporal patterns; can be highly efficient. |
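Real-time adaptation in spiking systems usually relies on local learning rules. These rules update a synapse using only the spike times of the two neurons it connects. Spike-timing-dependent plasticity (STDP) is the classic example. Below is a minimal all-pairs STDP sketch. The amplitudes, time constants, and weight bounds are chosen purely for illustration. Chips that support on-chip learning implement their own, often programmable, variants.

```python
import math
from typing import List


def stdp_dw(t_pre: float, t_post: float, a_plus: float = 0.01, a_minus: float = 0.012,
            tau_plus: float = 20.0, tau_minus: float = 20.0) -> float:
    """Pair-based STDP: weight change for one pre/post spike pair (times in ms)."""
    dt = t_post - t_pre
    if dt > 0:   # pre fired before post: potentiate (strengthen) the synapse
        return a_plus * math.exp(-dt / tau_plus)
    if dt < 0:   # post fired before pre: depress (weaken) the synapse
        return -a_minus * math.exp(dt / tau_minus)
    return 0.0


def apply_stdp(w: float, pre_spikes: List[float], post_spikes: List[float],
               w_min: float = 0.0, w_max: float = 1.0) -> float:
    """Sum the contributions of all spike pairs, then clip the weight to [w_min, w_max]."""
    for t_pre in pre_spikes:
        for t_post in post_spikes:
            w += stdp_dw(t_pre, t_post)
    return min(w_max, max(w_min, w))


print(apply_stdp(0.5, pre_spikes=[0.0, 30.0], post_spikes=[5.0, 35.0]))  # causal: grows
print(apply_stdp(0.5, pre_spikes=[5.0, 35.0], post_spikes=[0.0, 30.0]))  # anti-causal: shrinks
```

A synapse whose input keeps arriving just before the neuron fires gets stronger. One whose input arrives just after gets weaker. No global training pass is needed, which is what makes learning on the device itself feasible.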

Overcoming the Learning Curve

Look, the biggest barrier right now is conceptual. We think in loops and functions, not in spikes and synaptic weights. My advice? Start small. Pick a framework like Lava and run a tutorial on simulating a simple network that recognizes a temporal pattern—like Morse code or a specific gesture sequence.
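To see how a temporal pattern can be recognized with spikes, rather than with a loop over a buffer, consider the toy coincidence detector below. Each input channel gets a different delay, so only the target sequence makes all three spikes reach the detector at the same moment. The target is `a`, then `b` three steps later, then `c` two steps after that. The channel names, delays, weight, and decay are hypothetical. A framework such as Lava would express the same idea with synaptic delays and a trained network.

```python
from collections import defaultdict
from typing import Dict, List, Tuple


def detect_sequence(events: List[Tuple[int, str]], delays: Dict[str, int],
                    weight: float = 0.4, threshold: float = 1.0,
                    decay: float = 0.5) -> List[int]:
    """Coincidence detector: delay each channel so the target sequence's spikes
    arrive together, then feed them to one leaky neuron. Returns detection times."""
    arrivals: Dict[int, float] = defaultdict(float)
    for t, channel in events:
        if channel in delays:
            arrivals[t + delays[channel]] += weight  # delayed input to the detector
    if not arrivals:
        return []
    v = 0.0
    detections = []
    for t in range(max(arrivals) + 1):
        v = v * decay + arrivals.get(t, 0.0)  # leak, then add whatever arrives now
        if v >= threshold:
            detections.append(t)
            v = 0.0
    return detections


delays = {"a": 5, "b": 2, "c": 0}  # hypothetical per-channel delays
print(detect_sequence([(0, "a"), (3, "b"), (5, "c")], delays))  # right order and timing
print(detect_sequence([(0, "c"), (3, "b"), (5, "a")], delays))  # wrong order
```

Only the first call detects anything, at step 5. Reverse the order, or shift one spike by a single step, and the inputs no longer coincide, so the neuron never reaches threshold.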

Focus on problems where the four key neuromorphic computing advantages matter:

- Extreme Energy Efficiency (Think battery or solar-powered for years).

- Real-Time, Low-Latency Processing (No time to send data to the cloud).

- Inherent Adaptability & Learning (Systems that need to adjust on the fly).

- Handling Sparse, Event-Based Data (Like signals from event-based cameras).
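Event-based cameras (dynamic vision sensors) report per-pixel brightness changes instead of full frames. The sketch below emulates that behavior from ordinary frames, which is a handy way to generate test input before you have the sensor. The contrast threshold and the `(frame, row, col, polarity)` tuple layout are assumptions. A real sensor fires asynchronously per pixel rather than once per frame.

```python
import math
from typing import List, Tuple

Frame = List[List[float]]  # grayscale intensities, all > 0


def frames_to_events(frames: List[Frame], contrast: float = 0.2) -> List[Tuple[int, int, int, int]]:
    """Emit (frame_idx, row, col, polarity) when a pixel's log intensity has changed
    by at least `contrast` since that pixel's last event."""
    if not frames:
        return []
    ref = [[math.log(p) for p in row] for row in frames[0]]  # per-pixel reference level
    events = []
    for k, frame in enumerate(frames[1:], start=1):
        for r, row in enumerate(frame):
            for c, p in enumerate(row):
                diff = math.log(p) - ref[r][c]
                if abs(diff) >= contrast:
                    events.append((k, r, c, 1 if diff > 0 else -1))
                    ref[r][c] = math.log(p)  # only changed pixels update their reference
    return events


still = [[100.0] * 4 for _ in range(4)]
flash = [row[:] for row in still]
flash[1][2] = 200.0  # one pixel brightens, then returns to normal
print(frames_to_events([still, flash, still]))
```

Across two 16-pixel frames, only two events come out: one when the pixel brightens and one when it dims again. A static scene produces nothing.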

If your app doesn’t tick at least one of those boxes, a standard microcontroller might still be the right tool. And that’s fine! This is about using the right tool for the job.

The Road Ahead: More Than Just a Niche

It’s easy to see neuromorphic computing as a specialist corner of AI hardware. But the trends—the explosion of IoT, the need for sustainable tech, the demand for real-time intelligence—are all pushing in its direction. The developer who understands how to build efficient, event-driven intelligent systems at the edge will have a serious advantage.

We’re not at the “write once, run anywhere” stage yet. The hardware is still diverse. But the foundational concepts are crystallizing. The code you write for a spiking neural network today is an investment in a computing paradigm that looks less like a 1940s calculator and more like… well, like the most powerful computer we know of: the brain.

So maybe don’t rewrite your entire stack tomorrow. But tinker. Simulate. Get a feel for the event-driven rhythm of spikes instead of the relentless clock cycle. The future of computing isn’t just faster—it’s smarter, and quieter, listening to the world in a whole new way.