Software Strategies for the Spatial Computing and Mixed Reality Ecosystem

Let’s be honest—the hardware gets all the glory. Sleek headsets, futuristic glasses, the whole “wow” factor. But you know what? The real magic, the thing that will make or break this whole spatial computing and mixed reality (MR) revolution, is the software. It’s the invisible architecture, the code that turns a fancy wearable into a useful tool or a captivating world.

Developing for this ecosystem isn’t just mobile or desktop development in 3D. It’s a fundamentally different beast. The strategies that worked before? They need a serious rethink. Here’s the deal: we need to talk about the software strategies that will actually work in this blended space between our physical and digital lives.

Foundational Shift: From Screens to Spaces

First, you have to internalize the core shift. We’re moving from designing for screens to designing for spaces. Your user isn’t just clicking; they’re looking, gesturing, moving, and speaking. The software has to be context-aware, responding not just to user input but to the room, the objects in it, and even the user’s own body.

This means your spatial computing software architecture needs a few non-negotiable layers:

- Environmental Understanding: Can your app recognize a wall, a table, or the floor? If so, it can use that surface for display or interaction.

- Persistent Anchoring: That virtual monitor needs to stay on your real desk when you walk away and come back. Persistence is key for utility.

- Multi-modal Input: Relying solely on hand-tracking or a single controller is a gamble. The best apps blend gaze, gesture, voice, and even traditional input seamlessly. It’s about offering choices.
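To make the environmental understanding layer a bit more concrete, here is a minimal sketch of how an app might label a detected surface from its normal vector. Platform SDKs typically report plane classifications themselves, so treat this as an illustration of the idea rather than any platform's method. The `DetectedPlane` type, the 15-degree tolerance, and the 0.2 m floor cutoff are all assumptions made up for this example.

```python
import math
from dataclasses import dataclass
from typing import Tuple

Vec3 = Tuple[float, float, float]


@dataclass(frozen=True)
class DetectedPlane:
    """Hypothetical stand-in for a plane reported by a tracking SDK."""
    normal: Vec3      # surface normal in world space, +Y is up
    height_m: float   # height of the plane's center above the floor


def classify_plane(plane: DetectedPlane,
                   tolerance_deg: float = 15.0,
                   floor_max_height_m: float = 0.2) -> str:
    """Label a plane as floor, table, wall, ceiling, or unknown.

    The thresholds are illustrative assumptions, not platform values.
    """
    nx, ny, nz = plane.normal
    length = math.sqrt(nx * nx + ny * ny + nz * nz)
    if length == 0.0:
        raise ValueError("plane normal must be non-zero")

    # Angle between the surface normal and world "up".
    cos_up = max(-1.0, min(1.0, ny / length))
    angle_deg = math.degrees(math.acos(cos_up))

    if angle_deg <= tolerance_deg:
        # Upward-facing: near the ground it is the floor, higher up a table.
        return "floor" if plane.height_m <= floor_max_height_m else "table"
    if angle_deg >= 180.0 - tolerance_deg:
        return "ceiling"
    if abs(angle_deg - 90.0) <= tolerance_deg:
        return "wall"
    return "unknown"
```

An app could use the label to decide where content belongs: a virtual monitor snaps to a "wall" or hovers over a "table", while anything labeled "unknown" is left alone.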

Core Development Strategies to Embrace

1. Platform-Agnostic Where Possible, Native Where Necessary

Fragmentation is already here. You’ve got Apple Vision Pro, Meta Quest, Microsoft HoloLens, and others, all with different operating systems and capabilities. Locking yourself to one is risky. Honestly, the smart play is to use cross-platform engines like Unity or Unreal Engine for the core experience. They handle the brutal complexity of 3D rendering and give you a common layer over each platform’s spatial mapping.

But—and this is a big but—don’t be afraid to go native for the killer features. If a platform’s unique sensor or co-processor enables a dramatically better interaction, use it. Your strategy should be a hybrid: a portable core with specialized, high-impact plugins.
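One way to picture that hybrid is a small interface in the portable core, with native plugins registered per platform and a generic fallback when none exists. The sketch below is only an outline of the pattern. The class names, the platform key, and the interaction labels are hypothetical and do not correspond to any real SDK.

```python
from abc import ABC, abstractmethod
from typing import Dict, Type


class SelectionProvider(ABC):
    """Interface the portable core depends on (hypothetical)."""

    @abstractmethod
    def selection_method(self) -> str:
        """Return the interaction style used to select objects."""


class PortableSelection(SelectionProvider):
    """Fallback that should work on any headset with a controller."""

    def selection_method(self) -> str:
        return "controller-ray"


class EyeTrackedSelection(SelectionProvider):
    """Native plugin for a platform that exposes eye tracking (assumed)."""

    def selection_method(self) -> str:
        return "gaze-and-pinch"


# Registry of high-impact native plugins; the key is a made-up platform id.
NATIVE_PLUGINS: Dict[str, Type[SelectionProvider]] = {
    "headset-with-eye-tracking": EyeTrackedSelection,
}


def provider_for(platform_id: str) -> SelectionProvider:
    """Use the native plugin when one is registered, else the portable core."""
    plugin = NATIVE_PLUGINS.get(platform_id)
    return plugin() if plugin is not None else PortableSelection()
```

The rest of the app only ever talks to `SelectionProvider`, so adding a new platform means registering one more plugin rather than touching the core.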

2. Design for “Micro-Interactions” and Ergonomics

In mixed reality, fatigue is a real design constraint. An app that requires constant, wide arm gestures is a nightmare. The best MR user experience design focuses on micro-interactions: a subtle pinch, a glance-based selection, a quick voice command.

Think about “rest states.” Where do the UI elements live when not in use? Can they fade into the periphery? Software must be ergonomic, not just visually cool. It’s a dance between presence and comfort.
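A rest state can be as simple as tying a panel's opacity to how far it sits from the user's gaze. Here is a rough sketch under made-up assumptions: the panel is fully visible within 10 degrees of the gaze direction, fades linearly out to 30 degrees, and never drops below 15% opacity so it stays findable. The function name and all three defaults are illustrative, not taken from any toolkit.

```python
def rest_state_opacity(angle_from_gaze_deg: float,
                       focus_deg: float = 10.0,
                       rest_deg: float = 30.0,
                       min_opacity: float = 0.15) -> float:
    """Fade a UI element toward its rest state as it leaves the gaze center.

    All thresholds are illustrative assumptions for this sketch.
    """
    if rest_deg <= focus_deg:
        raise ValueError("rest_deg must be greater than focus_deg")
    if angle_from_gaze_deg <= focus_deg:
        return 1.0            # in focus: fully visible
    if angle_from_gaze_deg >= rest_deg:
        return min_opacity    # in the periphery: resting
    # Linear fade between the focus cone and the rest cone.
    t = (angle_from_gaze_deg - focus_deg) / (rest_deg - focus_deg)
    return 1.0 - t * (1.0 - min_opacity)
```

In practice you would probably smooth the value over time as well, so a quick glance away doesn't make the interface flicker.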

3. Prioritize Shared Experiences and the “Network Effect”

Spatial computing’s biggest pitfall could be isolation. The most compelling software strategies actively fight this. This means building in collaboration from the ground up. Think about:

- Multi-user persistence: A 3D model you and a remote colleague are editing stays synced in the same real-world spot.

- Asynchronous annotations: Leaving a voice note or a 3D arrow pinned to a machine for the next shift worker.

- Data interoperability: Can your MR visualization tool import from common CAD or BIM formats? It has to.

The value multiplies when experiences are shared. That’s the network effect, and it’s a powerful driver for adoption in enterprise mixed reality solutions and beyond.
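The trick behind multi-user persistence is that shared content is stored relative to a common anchor, not in any one device's coordinates. Each headset sees the anchor at a different pose in its own world space and converts from there. The sketch below assumes gravity-aligned anchors (position plus a yaw rotation about the up axis) to keep the math short. `AnchorPose` and both functions are hypothetical names for this example.

```python
import math
from dataclasses import dataclass
from typing import Tuple

Vec3 = Tuple[float, float, float]


@dataclass(frozen=True)
class AnchorPose:
    """Where one device sees the shared anchor (hypothetical type)."""
    position: Vec3   # anchor origin in this device's world space
    yaw_rad: float   # rotation about the +Y (up) axis


def anchor_to_world(anchor: AnchorPose, local: Vec3) -> Vec3:
    """Convert an anchor-relative point into this device's world space."""
    c, s = math.cos(anchor.yaw_rad), math.sin(anchor.yaw_rad)
    x, y, z = local
    ax, ay, az = anchor.position
    # Rotate about Y, then translate by the anchor position.
    return (ax + c * x + s * z, ay + y, az - s * x + c * z)


def world_to_anchor(anchor: AnchorPose, world: Vec3) -> Vec3:
    """Inverse of anchor_to_world: express a world point relative to the anchor."""
    c, s = math.cos(anchor.yaw_rad), math.sin(anchor.yaw_rad)
    dx = world[0] - anchor.position[0]
    dy = world[1] - anchor.position[1]
    dz = world[2] - anchor.position[2]
    # Undo the translation, then apply the inverse (transposed) rotation.
    return (c * dx - s * dz, dy, s * dx + c * dz)
```

When one user moves the model, their device calls `world_to_anchor` and syncs the anchor-relative position. Every other device runs `anchor_to_world` with its own view of the anchor, and the model lands on the same physical spot.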

The Toolbox: Frameworks and Considerations

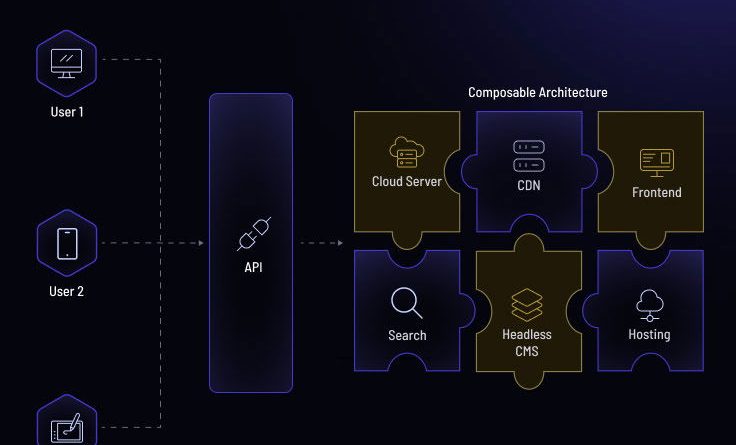

Okay, so how do you actually build this stuff? Well, your toolkit is evolving fast. Beyond the major game engines, you have platform-specific SDKs (like visionOS’s RealityKit or Meta’s Presence Platform). But the rising stars are frameworks that abstract some of the spatial complexity.

| Focus Area | Key Tools & Frameworks | Why It Matters |
| --- | --- | --- |
| 3D Content & Rendering | Unity, Unreal Engine, Babylon.js | Handles the heavy lifting of graphics, physics, and asset management. |
| Spatial Mapping & Understanding | ARKit, ARCore, OpenXR | Provides the “bridge” to understand the real world through the device’s sensors. |
| Multi-user & Cloud | Azure Spatial Anchors, AWS IoT TwinMaker, Normcore | Enables shared, persistent experiences across devices and locations. |
| UI/UX for Spatial | Shader Graph, MRTK (Mixed Reality Toolkit), custom shaders | Creates interfaces that feel part of the world, not floating screens. |

Remember, your choice here dictates your reach and your development speed. Picking a stack is a strategic decision, not just a technical one.

Overcoming the Inevitable Hurdles

It won’t all be smooth sailing. Here are a couple of big, gnarly challenges you’ll face—and how to think about them.

Challenge 1: The “Empty Room” Problem. A user puts on a headset in their living room and sees… nothing. Your app has to provide immediate value or guidance. Onboarding is everything. Use playful tutorials that teach interaction in-context. Seed the environment with something interesting right away.

Challenge 2: Performance is King (and Queen). In VR, low frame rates cause nausea. In MR, they break the fragile illusion of blending realities. Every polygon, every texture, every script counts. The strategy? Aggressive optimization and level-of-detail (LOD) systems that are more aggressive than you’d ever need on a PC. Prioritize a rock-solid frame rate at the headset’s native refresh rate (90 fps on many devices, which leaves about 11 ms per frame) over visual fidelity every time.
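To put numbers on that, a 90 fps target gives you 1000 / 90 ≈ 11.1 ms to simulate and render each frame. Here is a small sketch of a LOD picker that steps down one extra level whenever the last frame blew that budget. The distance thresholds and the one-level penalty are assumptions chosen for illustration. Game engines ship their own LOD systems, and this is not how any particular one works.

```python
import bisect
from typing import Sequence


def frame_budget_ms(target_fps: float) -> float:
    """Time available per frame, in milliseconds, at the target frame rate."""
    if target_fps <= 0:
        raise ValueError("target_fps must be positive")
    return 1000.0 / target_fps


def select_lod(distance_m: float,
               last_frame_ms: float,
               target_fps: float = 90.0,
               thresholds_m: Sequence[float] = (2.0, 5.0, 10.0)) -> int:
    """Pick a level of detail: 0 is the most detailed mesh.

    thresholds_m must be sorted ascending; the values are illustrative.
    """
    # Base level from distance: one step coarser per threshold crossed.
    lod = bisect.bisect_right(thresholds_m, distance_m)
    # Over budget last frame? Drop one more level to claw back time.
    if last_frame_ms > frame_budget_ms(target_fps):
        lod += 1
    # Never go past the coarsest available level.
    return min(lod, len(thresholds_m))
```

With these defaults, an object 1 m away renders at LOD 0 while frames take 9 ms, but drops to LOD 1 as soon as a frame takes 13 ms. Pass a different `target_fps` for headsets that run at other refresh rates.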

Where This Is All Heading: The Invisible Interface

So, what’s the endgame for software in this ecosystem? In my view, it’s the invisible interface. The most powerful spatial computing application won’t feel like an “app” at all. It’ll feel like a natural extension of your intent. You’ll glance at a broken engine part, and a schematic will simply appear, layered over reality. You’ll think about a data set, and it will materialize in the air, already formatted for discussion.

Getting there requires software that is patient, contextual, and astonishingly clever. It requires strategies built not on dominating the user’s field of view, but on enhancing their perception of the world already there. The winning software won’t shout for attention. It’ll whisper exactly what you need to know, right where and when you need it.

That’s the real strategy, isn’t it? Building not for the device, but for the human experience it enables. The rest is just code.